Power Virtual Agents

This no-code chat bot builder has changed the market landscape with its ease of use. It has the highest NPS on the platform while breaking new ground technologically through a steady release cadence of AI features. I’m most proud of my work on the foundational authoring experience and on the product’s major AI features. The work sample below shares the end-to-end design of the “model confusion” feature.

“We love that Power Virtual Agents doesn’t require a developer to add and update content. … Employees from each part of the business can continually decide how the bot should answer questions related to their function.”

Kim Rometo: Vice President and Chief Information Officer

Miami Dolphins and Hard Rock Stadium

Role: UX Design

Design team size: 6 UX designers, 1 content designer, 1 design researcher, and 1 experience analyst

Time on project: 2 years

Areas of ownership: Owner of core authoring, AI-powered features and accessibility.

The Devs, PM and I after successfully closing all our accessibility bugs before GA

Sample feature: Topic Confusion

How can we leverage our AI capabilities to identify semantically similar trigger phrases and help users resolve these conflicts?

Timeline: Four weeks for Part 1, six for Part 2

Role: Lead design

Design team members: one supporting UX designer, one content designer, and one design researcher

The users

A key distinction for our product and this feature is that our core users do not code. The product is designed with the understanding that our users have no framework for developer concepts and no experience with data science or AI. Instead, they are content writers with little or no bot authoring experience, bot authors with experience writing dialogues, and, in a few cases, IT administrators.

Product context

In Power Virtual Agents, a user is likely to have only one or two bots (or, for very large customers, three or four). Within each bot, the different possible conversations are called topics. Smaller businesses have tens to hundreds of topics, while larger operations have thousands.

These topics are initiated by trigger phrases. For example, a chat bot end user might say “Hi I need help with a return”. Our system then uses our AI model to match this message to a corresponding topic and subsequently initiate the appropriate conversation.

When the bot cannot find a confident match for the user’s message, one of the fallback behaviours is to ask a “did you mean” question. A closer look at why there is no clear match shows one of two causes: either two topics have been written in a way that makes them overlap in meaning, or there is a gap in the content and the user is asking for something not yet authored.

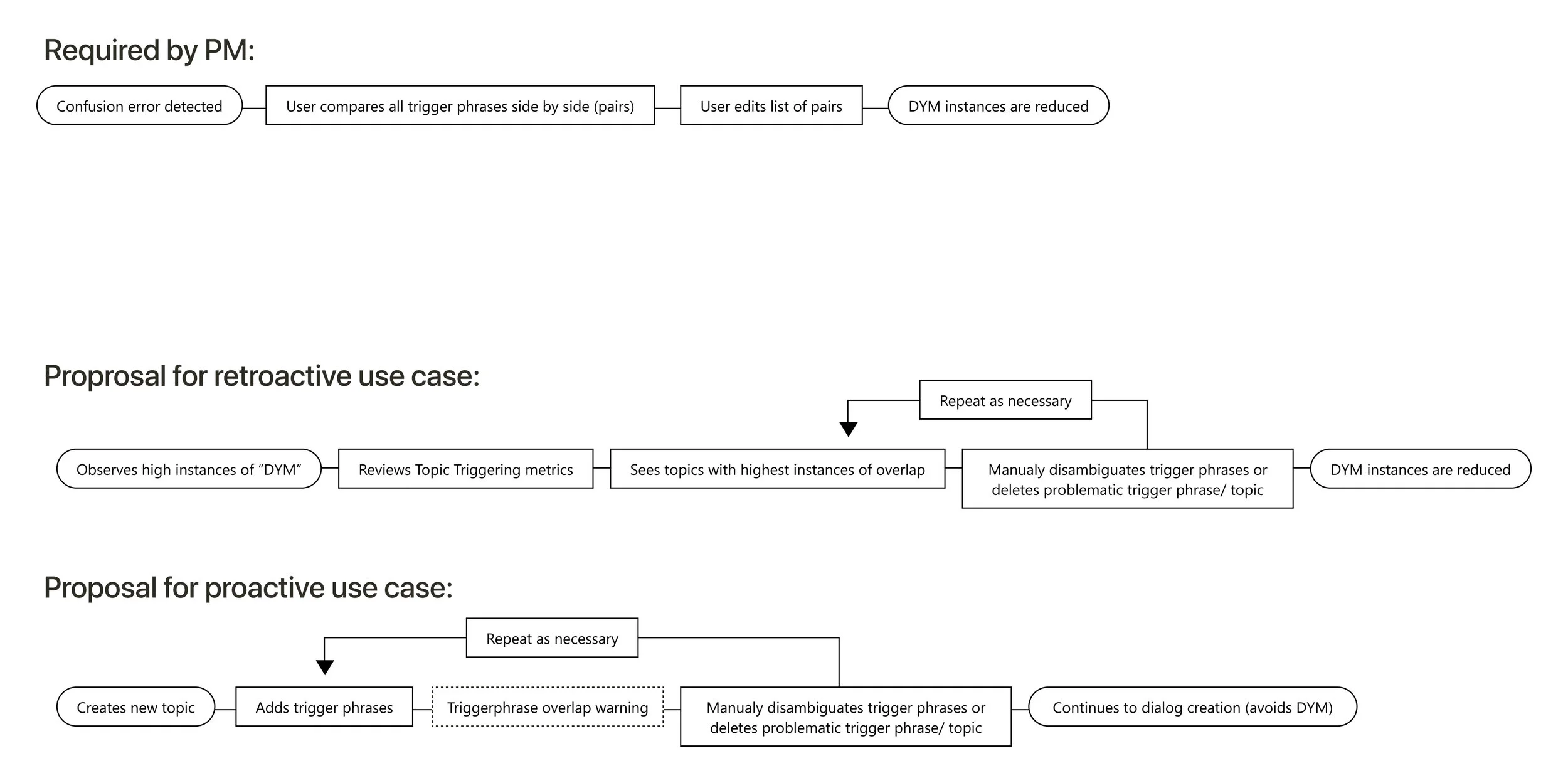
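To make the triggering behaviour described above more concrete, here is a minimal Python sketch. It is not the product’s actual model: the topics, the trigger phrases, the word-overlap `similarity` score, and the `confident` and `margin` thresholds are all assumptions chosen purely for illustration.

```python
import re

# Hypothetical topics and trigger phrases, invented for this example.
TOPICS = {
    "Returns": ["I need help with a return", "return an item"],
    "Refunds": ["I need help with a refund", "where is my refund"],
    "Store hours": ["when are you open", "store opening hours"],
}


def tokens(text):
    """Lowercase a phrase and split it into a set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def similarity(a, b):
    """Jaccard word overlap: a crude stand-in for a semantic AI model."""
    ta, tb = tokens(a), tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def rank_topics(message, topics):
    """Score each topic by its best-matching trigger phrase, best first."""
    scores = {
        name: max(similarity(message, phrase) for phrase in phrases)
        for name, phrases in topics.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def route(message, topics, confident=0.6, margin=0.2):
    """Return (action, candidate topics, likely cause of the ambiguity).

    Assumes at least two topics. Both thresholds are illustrative guesses.
    """
    ranked = rank_topics(message, topics)
    (best, best_score), (second, second_score) = ranked[0], ranked[1]
    if best_score >= confident and best_score - second_score >= margin:
        return ("match", [best], None)
    # No confident match: fall back to a "did you mean" question.
    # Two strong candidates suggest overlapping topics; otherwise the
    # message is probably asking for something not yet authored.
    cause = "overlap" if best_score >= confident else "gap"
    return ("did_you_mean", [best, second], cause)
```

In this sketch, `route("Hi I need help with a return", TOPICS)` resolves to the hypothetical Returns topic, while a vaguer message such as “I need help with a” scores equally well against Returns and Refunds and falls back to a “did you mean” question flagged as an overlap.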

Building on PM requirements

High-level IA maintenance

To give our users the means to resolve this common issue, we needed to take an integrated approach and meet them where they were in their workflow. This led me to capture some existing concerns I’d had about the product IA and to explore how this feature could resolve some of them, above and beyond what was asked of it. The feature needed to work as part of a broader ecosystem, and that ecosystem had long been neglected. My recommendations focused primarily on making our left nav more task-focused. Users should be able to navigate the app based on their evolving needs as they grow a bot. The current left nav was a collection of features that had been added over the past few years and did not reflect the users’ needs.

From a spreadsheet to an experience

While remaining focused on the timeline, I saw that a proactive solution would be vital to the user’s success, as opposed to the solely retroactive review process originally proposed by PM (the original proposal was to replicate an Excel-style table view). I created initial designs and reviewed them with my design team, the broader PM team, and some of our customers. From there we converged on a design we could test with users.

User testing take-aways

Additional links to documentation with supporting information regarding data security would be helpful in improving trust scores (which were otherwise positive).

Based on interviews, the terminology needed some updates and additional descriptors. Original terminology explorations were not clearly directive or explicit around the issue of overlapping triggers.

Overall, the users understood and appreciated the proactive warnings and were successful in completing the task.

Final designs for all feature stages

Phase 1

The initial scope of work targeted giving users real-time feedback. This work alerted users to the existence of conflicting topics as they created new ones.

Phase 2

With this update, users can prioritise and resolve the full spectrum of triggering errors. This UI supports bot improvement at scale.

Phase 3

Final implementation designs include contextual analytics. This gives the user a broader understanding of their bot’s triggering and how to improve its engagement with end users.

Outcomes

For several complex reasons, this feature set ended up being the only improvement shipped during that semester. The work was vital in unblocking several of our MVP customers and set up our new users for success when building comprehensive bots, regardless of size.

The work was heavily featured at Ignite by both the product team and high-level organizational leaders.

We are eagerly awaiting quantitative data insights as the work moves from private to public availability.